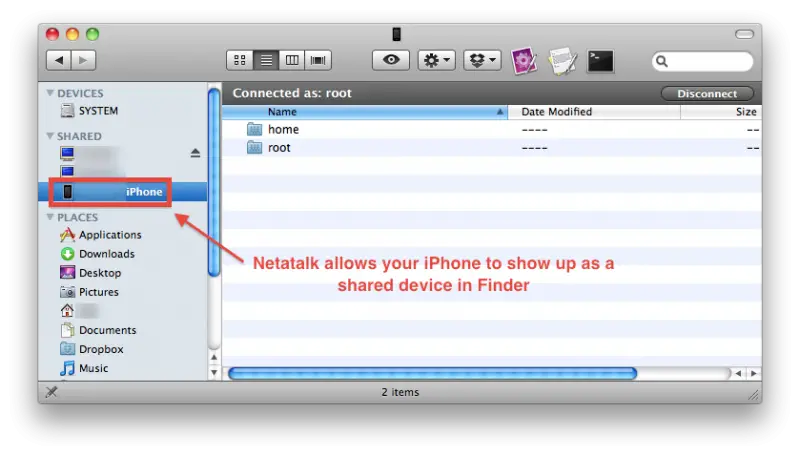

Claudio Saspinski Asks: Is degeneracy split a particular case of Hamiltonian diagonalization in 1D nearly free electron model?

In the nearly free electron model, the diagonalization of the Hamiltonian leads to the equations: $$E_0(k,h_n)A_n \sum v_m A_{\ldots}$$ All the references that I read mention the degenerate case after the formula for the diagonalization of the Hamiltonian, but without making an explicit connection between them.

The trouble we're facing is that when an OS X client saves a file to the server, the permissions are automatically set for that specific user, allowing only read access by all other users. Is there a way of setting netatalk to read/write enable all saved/transferred files and directories? Possibly setting an entire group to super-user status? Thanks.

The maintainers of the other shares get the same permissions as everyone else when working in shared stuff, and permissions

My smb.conf has been working with only minor tweaks through several different versions of Samba, and currently is used with Samba 3.2.5. I never had it running on Ubuntu 8.04, but it ran on Ubuntu 7.04 for a long time before getting migrated to a recent Debian Lenny.

    self.w1, self.w2, self.bias = 0.01, 0.03, 0.05

    logits = (x1 * self.w1) + (x2 * self.w2) + self.bias
    predictions = self.sigmoid(torch.tensor(logits))

    def fit(self, training_inputs, targets, epochs=10000):
        for training_input, target in zip(training_inputs, targets):
            prediction = self.sigmoid(torch.tensor(logits)).numpy()
            # sum of squared residuals, alternatively you can use mean squared error
            loss = self.calculate_loss(target, prediction)
            d_loss_and_d_prediction = -2 * (target - prediction)
            d_sigmoid_and_d_logits = logits * (1 - logits)
            gradient_0.append(d_loss_and_d_prediction)
            gradient_1.append(d_sigmoid_and_d_logits)
            d_loss_and_d_w1 = gradient_2_w1 * gradient_0 * gradient_1
            d_loss_and_d_w2 = gradient_2_w2 * gradient_0 * gradient_1
            step_size_w1 = d_loss_and_d_w1 * self.learning_rate
            step_size_w2 = d_loss_and_d_w2 * self.learning_rate

    def calculate_loss(self, target, prediction):

However when I try the same with pytorch, it works

Jane902 Asks: Calculating percentage of Zn in a coin

An old coin found in an ancient temple is composed of zinc coated with copper. In an experiment to find the percent zinc in the coin, a student determined the weight of the coin to be 3.0 g. Then the student made several scratches in the copper coating (to expose the underlying zinc) and put the scratched coin in hydrochloric acid, where the following reaction occurred between the zinc and HCl (copper remained undissolved):

$$\mathrm{Zn + 2\,HCl \rightarrow ZnCl_2 + H_2}$$

The student collected the hydrogen produced over water at 27 °C. The collected gas occupied a volume of 1 L at a total pressure of 1.02 bar. What percent zinc in the coin would the student find? (Assume that all the Zn in the coin dissolves.)

The mark scheme answer is D) 88.3%, which is the closest answer to the value I got, but why don't I get that answer? Is it an error in my calculation, the MCQ answers, or something else?
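For the zinc coin question, the arithmetic can be sketched in a few lines of Python. This is a minimal sketch, not the mark scheme's method: it assumes ideal-gas behaviour, one mole of H2 per mole of Zn, a molar mass of 65.38 g/mol for zinc, and a water vapour pressure of about 0.0356 bar at 27 °C. The vapour pressure is not given in the question as quoted here, so that value and the function name `percent_zinc` are assumptions made for illustration.

```python
R = 0.083145   # gas constant in L·bar/(mol·K)
M_ZN = 65.38   # molar mass of zinc in g/mol (assumed value)


def percent_zinc(coin_mass_g, volume_l, total_pressure_bar, temp_c,
                 water_vapor_pressure_bar=0.0):
    """Percent Zn by mass, from H2 collected over water (hypothetical helper)."""
    # Dalton's law: the collected gas is H2 plus water vapour.
    p_h2 = total_pressure_bar - water_vapor_pressure_bar
    # Ideal gas law: n = PV / (RT), with T in kelvin.
    n_h2 = p_h2 * volume_l / (R * (temp_c + 273.15))
    # Zn + 2 HCl -> ZnCl2 + H2, so moles of Zn equal moles of H2.
    mass_zn = n_h2 * M_ZN
    return 100.0 * mass_zn / coin_mass_g


# With the assumed vapour-pressure correction (about 0.0356 bar at 27 °C).
corrected = percent_zinc(3.0, 1.0, 1.02, 27.0, water_vapor_pressure_bar=0.0356)
# Without any correction, treating the whole 1.02 bar as H2.
uncorrected = percent_zinc(3.0, 1.0, 1.02, 27.0)
print(f"corrected: {corrected:.1f}%  uncorrected: {uncorrected:.1f}%")
```

Under these assumptions the corrected value comes out near 86% and the uncorrected one near 89%, so the result is sensitive to the vapour pressure and the constants used. Small differences in those inputs may be enough to account for a gap between a hand calculation and a listed answer such as 88.3%.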
